Future Editor 2

2005-2006

Future Editor (the first) had grown larger and delivered far more than I ever anticipated when I first created it. I decided to start over and do things right from the beginning, using everything I had learned. Doing things right takes time, though, which is why this project never came close to matching the breadth of features in Future Editor. When it comes to depth and extensibility, however, it went much further than its predecessor.

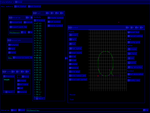

User interface

The GUI framework itself is flexible, with frames that can be moved and resized. General components and controllers are used to edit parameters. All interaction can still be done using the keyboard, but mouse interaction is also possible. Static parts of the GUI are cached on the graphics card, which makes the GUI itself render very fast.

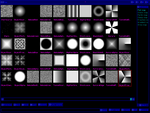

Map generation

The major complete feature in the editor is 2D map and image generation from a graph of parametrized filters and noise generators. Maps are generated with a functional approach, which makes it easy to combine different maps and reduces the need to track updates throughout the graph when changes are made. Animation was another big reason for the decision to abandon a raster representation. The obvious drawback of not having a raster representation is performance. I planned to add intermediate raster representations to optimize map generation but never got to it.
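To illustrate the functional approach described above, here is a minimal sketch in Python. It is not the editor's actual code: the `Map` type, the generators `checker` and `ripple`, and the filters `scale` and `blend` are all hypothetical names chosen for this example. The point is only that a map is a function of position and time, so combining maps is plain function composition and a raster exists only when the result is sampled.

```python
import math
from typing import Callable, List

# Assumption: a map is any function (x, y, t) -> value in [0, 1].
Map = Callable[[float, float, float], float]

def checker(size: float) -> Map:
    """Hypothetical generator: a checkerboard with square cells of the given size."""
    def sample(x: float, y: float, t: float) -> float:
        return float((math.floor(x / size) + math.floor(y / size)) % 2)
    return sample

def ripple(frequency: float, speed: float) -> Map:
    """Hypothetical generator: concentric waves that move outward over time."""
    def sample(x: float, y: float, t: float) -> float:
        return 0.5 + 0.5 * math.sin(frequency * math.hypot(x, y) - speed * t)
    return sample

def scale(source: Map, factor: float) -> Map:
    """Hypothetical filter: enlarge a map by sampling the source at scaled coordinates."""
    return lambda x, y, t: source(x / factor, y / factor, t)

def blend(a: Map, b: Map, weight: float) -> Map:
    """Hypothetical filter: linear mix of two maps; weight is the share taken from b."""
    return lambda x, y, t: (1.0 - weight) * a(x, y, t) + weight * b(x, y, t)

def render(source: Map, width: int, height: int, t: float = 0.0) -> List[List[float]]:
    """Rasterize only at the very end, by sampling the composed function per pixel."""
    return [[source(float(x), float(y), t) for x in range(width)]
            for y in range(height)]

# A small graph: a scaled checkerboard mixed with an animated ripple.
graph = blend(scale(checker(1.0), 4.0), ripple(0.5, 2.0), 0.25)
frame = render(graph, 8, 8, t=0.0)
```

Changing a parameter just means building a new closure, so nothing downstream has to be invalidated. The cost is that every pixel re-evaluates the whole graph, which is the performance drawback mentioned above.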

Text

Curve-based fonts are another feature; they can be used to add text to maps. This was tricky to do with the sampled approach, since there is no analytical way to calculate the distance from a point to a cubic spline in order to determine whether the sample is within a glyph or not. I used numerical methods to solve the problem. They were stable but unfortunately not very fast.
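As a rough illustration of what a numerical approach to this can look like, the sketch below estimates the distance from a point to a single cubic Bézier segment. It is not the method used in the editor, which is not described here; it assumes a coarse sampling pass followed by a ternary-search refinement, and the function names and step counts are made up for the example. It also covers only the distance part, not the inside/outside test for a glyph.

```python
import math

def bezier_point(p0, p1, p2, p3, t):
    """Evaluate a cubic Bezier curve with control points p0..p3 at parameter t."""
    u = 1.0 - t
    a, b, c, d = u * u * u, 3 * u * u * t, 3 * u * t * t, t * t * t
    return (a * p0[0] + b * p1[0] + c * p2[0] + d * p3[0],
            a * p0[1] + b * p1[1] + c * p2[1] + d * p3[1])

def distance_to_bezier(point, p0, p1, p2, p3, coarse_steps=32, refine_iters=40):
    """Approximate the shortest distance from point to the curve (assumed scheme)."""
    def dist_sq(t):
        x, y = bezier_point(p0, p1, p2, p3, t)
        return (x - point[0]) ** 2 + (y - point[1]) ** 2

    # Coarse pass: find the sample closest to the point.
    best = min(range(coarse_steps + 1), key=lambda i: dist_sq(i / coarse_steps))

    # Refinement: ternary search in the bracket around the best sample.
    # This assumes the squared distance has a single minimum inside the bracket.
    lo = max(0.0, (best - 1) / coarse_steps)
    hi = min(1.0, (best + 1) / coarse_steps)
    for _ in range(refine_iters):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if dist_sq(m1) < dist_sq(m2):
            hi = m2
        else:
            lo = m1
    return math.sqrt(dist_sq(0.5 * (lo + hi)))

# Control points on a straight line make the expected distance easy to check by hand.
line = ((0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0))
d = distance_to_bezier((1.5, 2.0), *line)
```

Running something like this for every sample against every curve of every glyph makes it clear why a stable numerical solution can still be slow.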

Animation

The animation system is event-based. What is interesting, though, is that the event handlers are executed in a small stack-based virtual machine. It has merely seven simple instructions. Six of them handle program flow. The seventh dispatches calls to external, predefined handlers that manipulate data on the stack and interact with the resources to be animated.
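The sketch below shows how small such a machine can be. The actual instruction set is not listed here, so everything in the code is an assumption made for illustration: the six flow instructions (`JMP`, `JZ`, `JNZ`, `CALL`, `RET`, `HALT`), the dispatch instruction `EXT`, the immediate operand passed to handlers, and the handler names `push`, `dup`, `sub` and `record`.

```python
def run(program, handlers, resources):
    """Execute a list of (opcode, argument) pairs on a hypothetical 7-instruction VM."""
    stack, return_addresses, pc = [], [], 0
    while True:
        op, arg = program[pc]
        pc += 1
        if op == "HALT":                      # stop and hand back the data stack
            return stack
        elif op == "JMP":                     # unconditional jump
            pc = arg
        elif op == "JZ":                      # pop; jump if the value is zero
            if stack.pop() == 0:
                pc = arg
        elif op == "JNZ":                     # pop; jump if the value is non-zero
            if stack.pop() != 0:
                pc = arg
        elif op == "CALL":                    # remember where to return, then jump
            return_addresses.append(pc)
            pc = arg
        elif op == "RET":                     # return to the most recent caller
            pc = return_addresses.pop()
        elif op == "EXT":                     # dispatch to an external handler
            name, immediate = arg
            handlers[name](stack, resources, immediate)
        else:
            raise ValueError(f"unknown instruction: {op}")

def h_push(stack, resources, immediate):
    stack.append(immediate)

def h_dup(stack, resources, immediate):
    stack.append(stack[-1])

def h_sub(stack, resources, immediate):
    b, a = stack.pop(), stack.pop()
    stack.append(a - b)

def h_record(stack, resources, immediate):
    # Stand-in for a handler that touches an animated resource.
    resources["log"].append(stack.pop())

handlers = {"push": h_push, "dup": h_dup, "sub": h_sub, "record": h_record}

# Count down from 3, recording each value in the resource log.
program = [
    ("EXT", ("push", 3)),       # 0: counter = 3
    ("EXT", ("dup", None)),     # 1: loop start, copy the counter
    ("EXT", ("record", None)),  # 2: consume the copy, write it to the resource
    ("EXT", ("push", 1)),       # 3
    ("EXT", ("sub", None)),     # 4: counter = counter - 1
    ("EXT", ("dup", None)),     # 5
    ("JNZ", 1),                 # 6: loop again while the counter is non-zero
    ("HALT", None),             # 7
]

resources = {"log": []}
final_stack = run(program, handlers, resources)
```

In this design all arithmetic and all resource access live in the handlers, so the machine itself only needs to know about control flow and dispatch.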

Event handlers are implemented in a high-level language with C-like syntax. Functions are compiled to an intermediate XML-based assembly language for the virtual machine (this was essential for debugging). Finally, a slimmer binary representation, bytecode, is generated, ready to be executed when an event occurs.

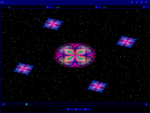

As an example, this is the script that controls all the effects in a demo I made of the animation system: FE2Demo1.fes. Here is a low-resolution rendering of the demo: